Decoding ChatGPT: The Ultimate Guide to LLM Mastery

Discover the power of Large Language Models in 'Decoding ChatGPT': a guide to mastering prompt engineering, understanding capabilities, and practical applications.

Large Language Models (LLMs) have emerged as a transformative force in artificial intelligence, fundamentally changing how we interact with machines and understand language processing. These sophisticated computer programs, capable of generating and analyzing human-quality text, have revolutionized various aspects of Natural Language Processing (NLP). LLMs have become indispensable tools for search, speech-to-text, sentiment analysis, and text summarization by learning the intricate relationships between billions of words and phrases.

The impressive capabilities of LLMs are rooted in deep learning algorithms, specifically transformer models. These complex models can recognize patterns, translate languages, predict word sequences, and even generate creative text formats, all thanks to their vast knowledge gleaned from massive datasets. Their true power lies in their ability to process and analyze enormous amounts of unlabeled text data having billions of parameters. This has made LLMs pivotal in driving diverse AI innovations, from predicting a sentence’s next word to finding offensive content.

Some real-world applications across diverse fields:

- 🚀 Enhancing Communication and Collaboration: Chatbots and Virtual Assistants, Real-time Translation, Personalized Content Curation

- 📈 Boosting Creativity and Productivity: Content Creation, Code Generation and Debugging, Scientific Discovery and Research.

- 📚 Transforming Education and Learning: Personalized Learning, Automated Grading and Feedback, Language Learning and Practice.

Exploring the LLM Toolbox: Understanding Models and Their Uses

🌐 Large Language Models (LLMs) are constantly evolving, with various models available, each possessing unique architectural features and excelling in specific tasks. To make the most of this powerful technology, it is essential to understand its internal workings and nuanced strengths. This exploration delves into two common LLM types: Base and Instruction-tuned.

Base LLMs: The Foundation of Natural Language Understanding

🏗️ Base LLMs form the bedrock of the LLM ecosystem. Trained on massive, general-purpose datasets, they excel in understanding and generating human-quality text across various tasks.

Key Characteristics

- 🧠 Transformer-based architecture: Base LLMs often employ transformer-based neural networks, known for their exceptional ability to process and encode relationships between words and text sequences.

- 📚 Extensive training: They undergo rigorous training on vast text corpora encompassing diverse genres and topics. This extensive exposure to language equips them with a comprehensive knowledge base and a deep understanding of natural language patterns.

- 🌍 Generalist capabilities: Base LLMs are not fine-tuned for specific tasks. Instead, they possess a broad range of abilities that can be applied to various language-related challenges.

Common Applications

- ❓ Question Answering: They can accurately answer open-ended questions, drawing information from their extensive knowledge base.

- 📑 Text Summarization: They can condense lengthy documents into concise summaries, capturing key points and reducing redundancy.

- 📝 Creative Language Generation: They can compose poems, scripts, marketing copy, and other creative text formats, demonstrating a remarkable ability to mimic and experiment with language.

Instruction-Tuned LLMs: Sharpening the Focus for Specialized Domains

🎯 Instruction-tuned LLMs represent a focused evolution of base LLMs tailored for exceptional performance in specific domains or tasks.

Key Characteristics

- 📖 Additional training: They undergo training on smaller, task-specific datasets, enhancing their ability to solve problems within a particular domain.

- 🔍 Specialist expertise: They develop expert-level understanding and performance in their designated focus areas.

Common Applications

- 🌐 Machine Translation: They can translate languages with impressive accuracy and fluency, capturing nuances and cultural contexts.

- 🧩 Code Generation and Optimization: They can analyze code, generate code snippets, suggest improvements, and even automate repetitive coding tasks, streamlining development processes.

- 🔬 Domain-Specific Analysis and Inference: They can perform tasks within specialized domains such as medical diagnosis support, financial risk assessment, or scientific literature analysis, offering valuable insights to professionals.

Prompt & Prompt Engineering 101: Leveraging the Power of Instruction

What is a Prompt? 🤔

In language models, a prompt is a question or instruction given to the model to generate an output. It serves as the starting point for the model's response, guiding it to produce relevant and useful information. Prompts can be simple commands, complex questions, or even a series of statements to set the context.

What is Prompt Engineering? 🛠️

Prompt Engineering is the process of designing, refining and optimizing the initial instructions or questions given to a language model to generate desired outputs. It requires a deep understanding of the model's capabilities; the process often involves a trial-and-error approach, where the prompt is continually adjusted and tested until the output meets the desired quality. The goal is to craft a prompt that yields the most accurate, relevant, and valuable response from the model, thereby enhancing the overall performance and utility of the language model in real-world applications.

Prompt engineering can help in addressing the issue of bias and misinformation in generative AI language models. Engineers can guide models to generate accurate, balanced, and fact-based responses by carefully crafting prompts. This involves understanding the nuances of language and the model's training data to mitigate biases and encourage the model to consider multiple perspectives. Additionally, prompt engineering can enhance the model's ability to verify information against reliable sources, reducing the spread of misinformation.

What are the different types of Prompting Techniques? 🧐

- Zero-Shot Prompting: The model receives a prompt without additional context and generates a response based on pre-training knowledge. E.g., "Write a poem about a lonely robot."

- Few-Shot Prompting: The model has a few examples (usually 1-3) before the prompt to guide its understanding and response. E.g., "Given the following examples, generate a polite email response to a customer inquiry about a delayed shipment."

- Chain-of-Thought (CoT) Prompting: The model's previous outputs are fed back as inputs, creating a chain of thoughts or a continuous dialogue. E.g., "Write a story about a detective solving a mystery. > The detective entered the dimly lit room. > The smell of dust and old books filled the air. > [Continue the story]"

- Self-Consistency Generated Knowledge Prompting: Questions or prompts are generated from the model's outputs and fed back as input to enhance consistency and coherence. E.g., "Write a summary of the article on climate change. > [Model generates summary] > What are the key takeaways from this summary? > [Model generates key takeaways]"

- Tree of Thoughts (ToT) Prompting: The model explores multiple branches or paths based on the initial prompt, generating diverse outcomes or solutions. E.g., "List three possible ways to improve customer satisfaction in a retail store."

- Retrieval Augmented Generation (RAG): The model retrieves relevant information from a large dataset during the generation process, enriching its knowledge base and improving response quality. E.g., "Write a blog post about the history of artificial intelligence, citing relevant research papers and historical events."

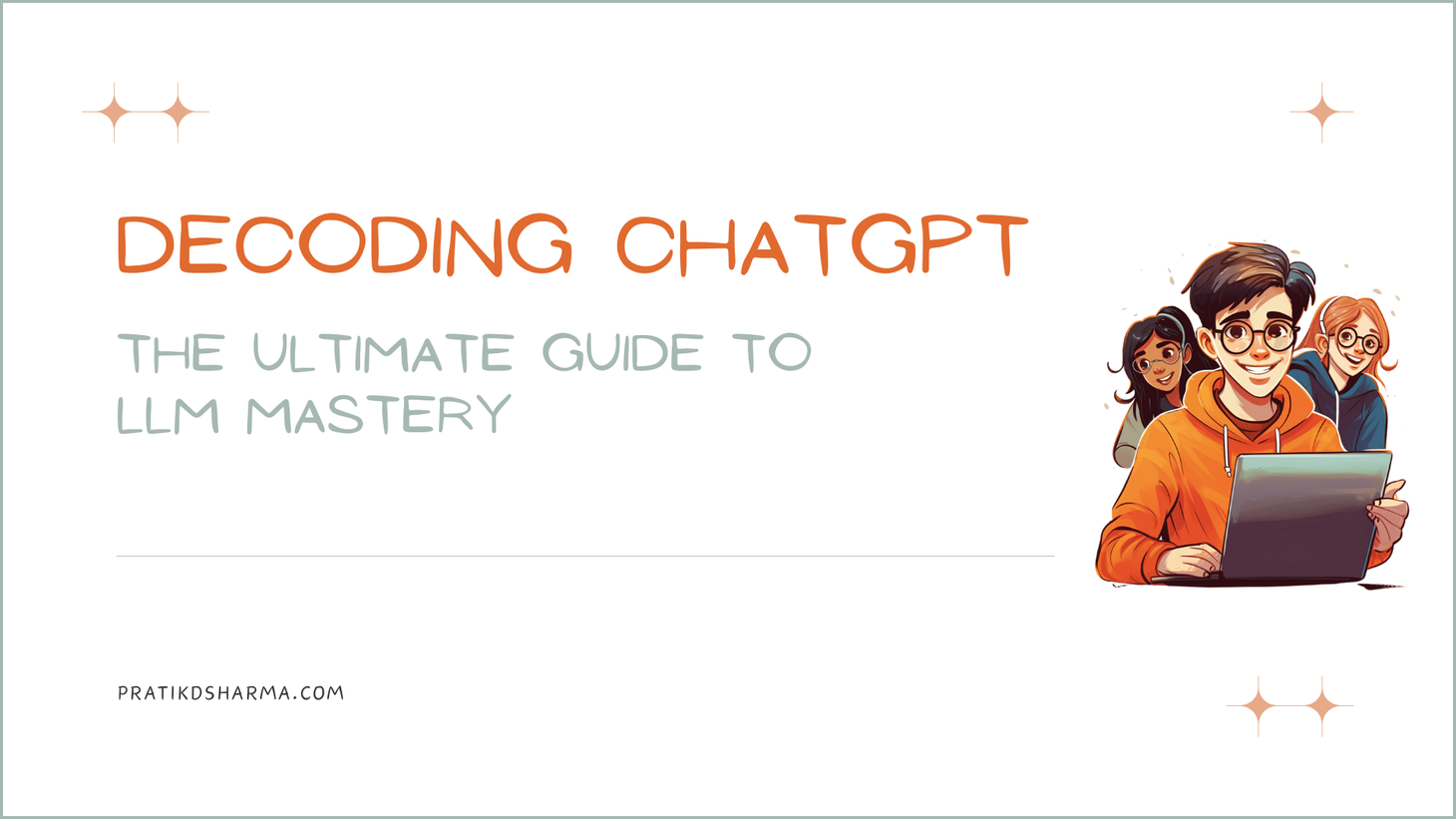

The Principles of Prompt Engineering: Commanding the LLM Language

🎨 Unlocking ChatGPT and LLMs' true potential lies in their capabilities and our ability to guide them precisely. This is where the art of the prompt comes in – a skill honed through the "ChatGPT Prompt Engineering for Developers" course offered by DeepLearning.ai, led by Andrew Ng and Isa Fulford (OpenAI).

Ready to put your artistic touch into practice, similar to what you would learn in a prompt engineering course? We'll share code examples using Python and the OpenAI API to guide you through the process. But don't worry if you're not a Python Picasso! Most of these examples can also be explored directly in the ChatGPT browser window, providing a user-friendly canvas for experimentation. Choose your environment, grab your brushes (prompts!), and let's dive into the world of LLM creativity!

Below is the code block we'll use to connect to OpenAI API.

Crafting Effective Prompts: Being Clear and Specific

📝 Clear and concise prompts are the foundation of success. Here's how to refine yours:

Tactic 1: Delimiters as Signposts

Use clear visual cues like triple quotes, backticks, or dashes to differentiate your prompt from the desired output. This helps the LLM understand where your instructions begin and end.

Tactic 2: Structured Output for Clarity

Specify the format you want the output to take. Do you need a bulleted list, JSON, or table output? Guiding the LLM's response helps it focus and deliver what you need.

Tactic 3: Checking Assumptions

Before asking the LLM to perform a task, ensure it has the necessary information. State the relevant context and check if its assumptions align with your expectations.

Tactic 4: Few-Shot Prompting

Give the LLM a taste of what you want. Provide examples of successful outputs for similar tasks before asking for the main one. This helps it understand your desired style and tone.

Time Considerations: Letting the LLM Think

🕒 Like any creative mind, LLMs need time to process information and formulate responses. Be patient and consider the following tactics.

Tactic 1: Break it Down Step by Step

Specify the steps or stages of completing the task. This provides a roadmap for the LLM and helps it structure its response effectively.

Tactic 2: Explore Before Concluding

Encourage the LLM to work out its solution before rushing to a conclusion. This allows it to consider different perspectives and potentially arrive at a more nuanced or creative outcome.

Acknowledging Model Limitations: Navigating the LLM Landscape

While LLMs are powerful, they are still under development and have inherent limitations. Be aware of these:

- Hallucinations: Sometimes, LLMs might generate plausible outputs but are factually incorrect. This is called hallucination. To counter this, always verify the information provided against reliable sources.

- Reducing Hallucinations: Train the LLM on relevant and accurate data to minimize reliance on internal biases and hallucinations. You encourage it to build its responses on solid ground by providing the right foundation.

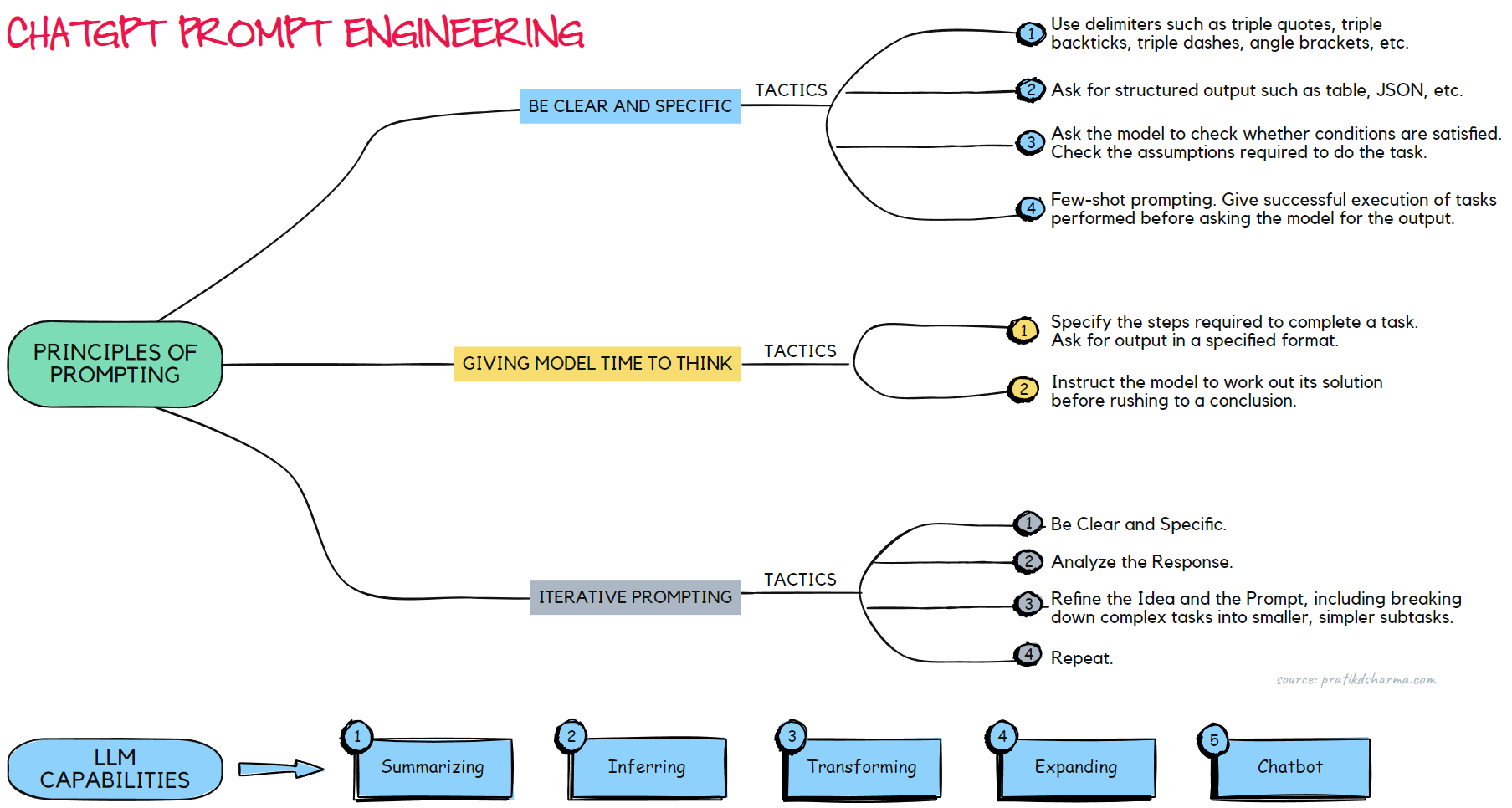

Iterative Prompting: Refining the Dialogue for Optimal Results

🔄 The journey to the perfect prompt often involves trial and error.

Embrace the iterative approach.

- Step 1: Be Clear and Specific. Start with a clear and concise prompt outlining your desired outcome.

- Step 2: Analyze the Response. If the output doesn't meet your expectations, analyze why. Was the prompt unclear? Did the LLM lack relevant information?

- Step 3: Refine the Idea and the Prompt. Based on your analysis, refine your original idea and rewrite the prompt, incorporating the learnings from the previous attempt. Also, consider breaking down a complex task into smaller, simpler subtasks.

- Step 4: Repeat. Iterate until you achieve the desired outcome. Remember, the perfect prompt is often a product of continuous refinement and collaboration between you and the LLM.

Example

Imagine you want GPT to generate a marketing product description from a fact sheet. The first attempt might be too long and focus on irrelevant details.

- Iteration 1: Limit the word count to 50. This might still not yield the desired result, as the LLM might prioritize brevity over accuracy.

- Iteration 2: Ask GPT to extract and organize information in a table format. Specify the dimensions you want in the output. This provides a more structured framework for the LLM to work with.

Andrew NG aptly summarizes the art of prompting:

By embracing this iterative approach and understanding the nuances of LLM capabilities, you can unlock their full potential and turn them into powerful tools for creativity, productivity, and intellectual exploration.

Unleashing the Powerhouse: LLMs Can Summarize, Infer, Transform, Expand

Large Language Models (LLMs) are more than chatbots or witty text generators. They are versatile powerhouses capable of manipulating information remarkably, opening doors to creative and practical applications. Let us explore some of their key capabilities:

Summarizing Information: Condensing the Essence

Are you tired of wading through mountains of text? LLMs can efficiently summarize documents, extracting key points while preserving the core meaning. Imagine browsing through hundreds of product reviews.

📣 Prompt 1: General Overview. Summarize the general sentiment and main features mentioned in these product reviews.

🔍 Prompt 2: Specific Focus. Analyze the reviews specifically for comments on shipping and pricing.

📝 Prompt 3: Word Limit. Please give me a concise 30-word summary of these reviews, highlighting the pros and cons.

Inferring Context: Reading Between the Lines

🔍 LLMs go beyond literal meaning, leveraging their vast knowledge and understanding of language to infer context and sentiment. They can analyze:

- 🌟 Product Reviews: Identifying positive or negative sentiment, extracting key themes, and detecting sarcasm.

- 📰 News Articles: Understanding the underlying narrative, extracting information, and differentiating between objective reporting and biased language.

This ability to infer meaning has revolutionized tasks like sentiment analysis, offering a nuanced alternative to traditional, data-hungry methods. Instead of training separate models for every task, a single LLM can handle numerous analyses with just one prompt.

Transforming Data: Shaping and Shifting

🔄 LLMs are experts in transformation, seamlessly changing the form and function of information. They can:

- 🌐 Translate Languages: Communicate across barriers by instantly translating texts into your desired language, fostering global collaboration and understanding.

- ✒️ Correct Errors: Polish your writing with spell-checking and grammar correction tools, ensuring your communications are clear and professional.

- 📊 Convert Formats: Bridge the gap between different data formats. Input HTML code and get its JSON equivalent, streamlining workflows and simplifying data manipulation.

These transformative abilities make LLMs invaluable tools for writers, programmers, and anyone who works with diverse data formats.

Expanding Ideas: Sparking the Creative Flame

🔥 LLMs are not just information processors; they can be your creative collaborators. Need a spark to ignite your next writing project? Set the stage:

- 🚀 Prompt 1: "I'm writing an email about sustainable living tips. Generate five catchy subject lines."

- 📝 Prompt 2: "Give me three different starting sentences for an essay on artificial intelligence."

LLMs can also expand existing ideas, crafting longer outputs like poems, scripts, or blog posts. And by adjusting the "temperature" parameter, you can control the model's creative freedom.

Lower temperatures favor predictable outputs, while higher temperatures introduce randomness and surprise, potentially leading to more unexpected and imaginative results.

Unleashing the power of LLMs goes beyond passively consuming information. These versatile models can be actively woven into your workflow, helping you summarize, analyze, transform, and expand your ideas. Embrace the LLM revolution and discover the power to do more, create more, and understand more than ever.

Conclusion

In conclusion, our journey through the LLM landscape, with ChatGPT as our guide, has revealed a world brimming with potential. We have explored:

- 🔍 Prompts & Prompt Engineering: Prompts guide language models. Prompt engineering refines them for desired outputs. It helps models generate accurate, relevant info and reduces misinformation by guiding them to consider multiple perspectives and verify against trusted sources. Different techniques include zero-shot (no context), few-shot (few examples), chain-of-thought (progressive dialogue), and more.

- 🌟 The art of prompt engineering: Mastering precise prompts is the key to unlocking LLMs' creative and practical capabilities. Following Andrew Ng's insights in the ChatGPT Prompt Engineering for Developers course and employing effective tactics like delimiters and temperature control, we can transform LLMs into powerful tools for our endeavors.

- 🧩 Diverse LLM types: Base LLMs offer versatile language understanding, while Instruction-tuned LLMs excel in specific domains. Understanding these distinctions empowers us to choose the right tool for the task.

- 🚀 Remarkable capabilities: LLMs' abilities are transformative, from summarizing information and translating languages to generating creative content and code. By embracing their potential, we can boost our productivity, enhance our creativity, and revolutionize workflows across various fields.

However, as we unlock the power of LLMs, it is crucial to remember the importance of responsible use. We must be mindful of potential biases, factual inaccuracies, and ethical considerations. Always verify information against reliable sources, strive for factual accuracy, and avoid perpetuating harmful stereotypes. By prioritizing responsible use and ethical engagement, we can ensure that LLMs empower humanity for the better.

The future of the LLM landscape is exciting, and with responsible hands, its potential is limitless. Let us continue to explore, learn, and create together with these powerful language models as our collaborators. 🌍

Fuel your AI curiosity! Get notified as soon as we publish new articles like this one, packed with the latest LLM news, practical applications, and expert insights. Subscribe to the newsletter and keep your AI knowledge tank topped up. 🚀📚

Stay Informed, Stay Inspired.

Join the newsletter to receive the latest updates in your inbox.

🚫 No spam. Unsubscribe anytime .